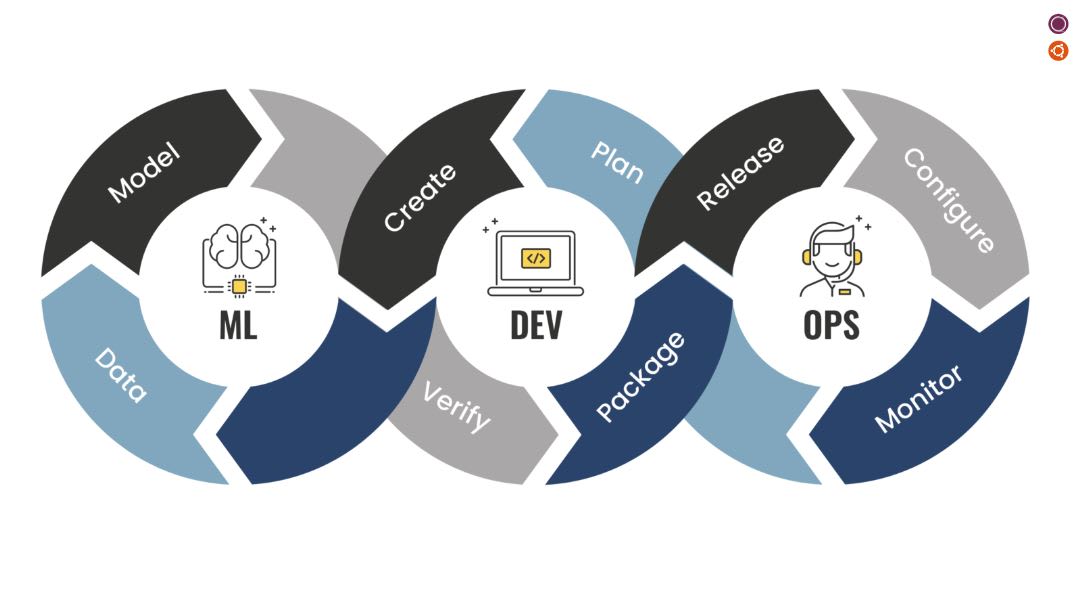

MLOps does not require a heavyweight platform from day one. It requires repeatable workflows and clear ownership.

Many growing teams stall not because they cannot train models, but because they cannot operate them reliably once those models are in production.

Start with a minimal but complete loop

Your first MLOps stack should support:

- data and model version tracking

- reproducible training pipelines

- staged deployment (canary or shadow)

- monitoring for drift, quality, and latency

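If any one of these pieces is missing, incidents become hard to diagnose. Without version tracking, for example, you cannot say which data and code produced the model that misbehaved.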
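One lightweight way to cover version tracking and reproducibility before adopting a dedicated tool is to write a run manifest next to every trained model. The sketch below assumes artifacts are available as bytes and that the caller supplies a code version such as a git commit SHA. `RunManifest`, `build_manifest`, and the field names are hypothetical, not any particular tool's API.

```python
import hashlib
import json
import platform
from dataclasses import asdict, dataclass


def sha256_bytes(data: bytes) -> str:
    """Content hash used as the version identifier of a data or model artifact."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class RunManifest:
    # Hypothetical field names; adapt them to whatever you track.
    data_hash: str
    model_hash: str
    code_version: str  # e.g. a git commit SHA supplied by the caller
    seed: int          # random seed used for training
    params: dict       # training hyperparameters
    python_version: str


def build_manifest(data: bytes, model: bytes, code_version: str,
                   seed: int, params: dict) -> RunManifest:
    """Record everything needed to trace (and rerun) one training run."""
    return RunManifest(
        data_hash=sha256_bytes(data),
        model_hash=sha256_bytes(model),
        code_version=code_version,
        seed=seed,
        params=params,
        python_version=platform.python_version(),
    )


def to_json(manifest: RunManifest) -> str:
    # sort_keys makes the output byte-stable, so manifests can be diffed directly.
    return json.dumps(asdict(manifest), sort_keys=True)
```

Storing this JSON beside the model artifact lets whoever handles an incident check which dataset hash and commit produced a bad model. For large files, a real setup would hash in chunks rather than loading the whole artifact into memory.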

Define ownership by stage

- Data owners manage data quality SLAs

- ML engineers own training and evaluation artifacts

- Platform team owns deployment reliability

- Product owners define business guardrail metrics

Giving every stage a named owner across functions guards against “works on my notebook” failures reaching production.

Monitoring that matters

Track model and business health together:

- prediction confidence shift

- feature distribution drift

- end-to-end latency

- business KPI movement tied to model output
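Feature distribution drift can be quantified per feature with the Population Stability Index (PSI), one common choice among several (the Kolmogorov–Smirnov test and KL divergence are alternatives). PSI compares binned proportions of a reference window, such as training data, against recent production values: PSI = Σ (actual_i − expected_i) · ln(actual_i / expected_i). A minimal stdlib-only sketch; the function names and the use of reference deciles as bin edges are assumptions for illustration.

```python
import math
import statistics
from bisect import bisect_right
from typing import Sequence


def quantile_edges(reference: Sequence[float], n_bins: int = 10) -> list[float]:
    """Interior bin edges from the reference sample's quantiles (n_bins - 1 cut points)."""
    return statistics.quantiles(reference, n=n_bins)


def bin_proportions(values: Sequence[float], edges: Sequence[float],
                    eps: float = 1e-6) -> list[float]:
    """Fraction of values in each of len(edges) + 1 bins; eps keeps empty bins out of log(0)."""
    if not values:
        raise ValueError("cannot bin an empty sample")
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [max(c / len(values), eps) for c in counts]


def psi(reference: Sequence[float], current: Sequence[float], n_bins: int = 10) -> float:
    """Population Stability Index of `current` measured against `reference`."""
    edges = quantile_edges(reference, n_bins)
    expected = bin_proportions(reference, edges)
    actual = bin_proportions(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))
```

A commonly cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but thresholds are worth tuning per feature against past incidents.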

Alerting should include runbook links and clear escalation paths.
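An alert is only actionable if the person receiving it knows what to check and whom to call next. A minimal sketch, assuming a hypothetical routing table that maps each monitored signal to the owning team from the ownership section. The team names, the `runbooks.example.com` URL pattern, and the `ml-oncall-lead` escalation contact are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    name: str
    severity: str      # e.g. "page" or "ticket"
    owner: str         # team that owns the stage behind this signal
    runbook_url: str   # where responders find diagnosis steps
    escalation: list[str] = field(default_factory=list)  # ordered contacts


# Hypothetical routing table: monitored signal -> owning team.
OWNERS = {
    "feature_drift": "data-owners",
    "confidence_shift": "ml-engineering",
    "latency_p95": "platform",
    "kpi_guardrail": "product",
}


def make_alert(signal: str, severity: str) -> Alert:
    """Build an alert that always carries an owner, a runbook link, and an escalation path."""
    owner = OWNERS.get(signal)
    if owner is None:
        # Refuse to emit unowned alerts: that is the gap the ownership model closes.
        raise ValueError(f"no owner registered for signal {signal!r}")
    return Alert(
        name=f"{signal}-{severity}",
        severity=severity,
        owner=owner,
        runbook_url=f"https://runbooks.example.com/{signal}",  # placeholder URL
        escalation=[owner, "ml-oncall-lead"],  # placeholder second tier
    )
```

Failing loudly on an unregistered signal is a deliberate design choice: it surfaces missing ownership when the alert is defined rather than during an incident.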

Scale gradually

As complexity grows, add automation in layers:

- automated retraining triggers

- policy checks before release

- incident retrospectives feeding into pipeline improvements
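The first two layers can start as plain functions in the pipeline: a retraining trigger that fires on drift or model age, and a policy check that blocks a release when a candidate breaks agreed guardrails. This is a hypothetical sketch; the metrics, default thresholds, and names such as `should_retrain` and `release_violations` are assumptions to replace with your own.

```python
from dataclasses import dataclass


def should_retrain(drift_psi: float, days_since_training: int,
                   psi_threshold: float = 0.25, max_age_days: int = 30) -> bool:
    """Hypothetical trigger: retrain on significant drift or when the model is too old."""
    return drift_psi > psi_threshold or days_since_training > max_age_days


@dataclass
class Candidate:
    # Offline evaluation results for a candidate model (illustrative metrics).
    accuracy: float
    p95_latency_ms: float
    manifest_complete: bool  # data hash, code version, seed all recorded


@dataclass
class Policy:
    min_accuracy: float
    max_p95_latency_ms: float
    max_accuracy_regression: float  # allowed drop versus the production model


def release_violations(candidate: Candidate, production_accuracy: float,
                       policy: Policy) -> list[str]:
    """Return human-readable violations; an empty list means the release gate passes."""
    violations = []
    if candidate.accuracy < policy.min_accuracy:
        violations.append(
            f"accuracy {candidate.accuracy:.3f} below floor {policy.min_accuracy:.3f}")
    if production_accuracy - candidate.accuracy > policy.max_accuracy_regression:
        violations.append("accuracy regressed beyond the allowed margin versus production")
    if candidate.p95_latency_ms > policy.max_p95_latency_ms:
        violations.append(
            f"p95 latency {candidate.p95_latency_ms:.0f} ms exceeds "
            f"{policy.max_p95_latency_ms:.0f} ms")
    if not candidate.manifest_complete:
        violations.append("run manifest incomplete; model is not reproducible")
    return violations
```

Returning the list of violations instead of a bare boolean lets the release job state exactly why it blocked, which gives incident retrospectives concrete material to work from.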

The first win in MLOps is operational clarity. The second is compounding reliability.
